A publication of The Graduate School, University of North Carolina at Chapel Hill

On-line Version Spring 2005



Photo by Will Owens

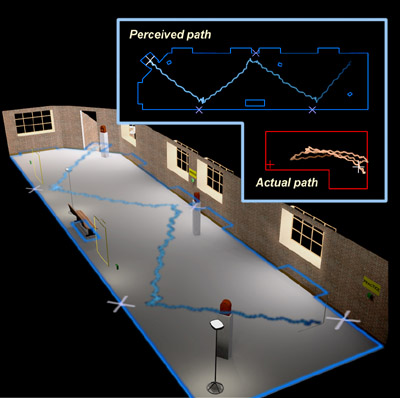

Ross and Charlotte Johnson Family Dissertation Fellow Sharif Razzaque developed his "redirected walking" technique to create large virtual environments in small computer science laboratories.

Leaping into the (virtual) future

Computer science student Sharif Razzaque has spent the past several years on the cutting edge of virtual reality research. Razzaque’s research group has produced what may be the world’s most advanced virtual environment system for making people feel they are in the virtual scene. When users find themselves at the edge of a virtual pit 20 feet deep, they feel and behave as if the pit were real. Even though they know the pit is not there, many cannot overcome their fear and step out into the void.

Part of the reason the system looks so real is that users actually walk around the laboratory while exploring the virtual scene. But the group could create only limited virtual scenes because their laboratory is not big enough for larger ones. To solve this problem, Razzaque is developing a new technique he calls “redirected walking.” Now in his final year, Razzaque is completing his doctoral dissertation on redirected walking with support from the Ross and Charlotte Johnson Family Dissertation Fellowship, part of the Royster Society of Fellows. In a conversation with The Fountain’s Grace Camblos, Razzaque explains his work with virtual environments and how redirected walking could send virtual reality technology in a new direction.

The Fountain: What are virtual environments? How do they work?

Sharif Razzaque (SR): When most people talk about virtual reality, they usually mean computer games on a desktop computer that draws three dimensional images from the user’s point of view. Here, we don’t like to call that virtual reality – that’s computer graphics. In fact we don’t even like the term virtual reality, because it’s ambiguous. We call them “virtual environments.”

You have a virtual environment when the person is immersed in the virtual scene as if they were there, so they can look left, look right or look under the table. And a virtual environment doesn’t even have to be visual. It can use audio. For instance, you could put on a headset that would make it sound like you were in the middle of a stage with a jazz band, and you could walk around and get closer to the saxophone, or closer to the trumpet.

In our experiments we create virtual environments by having people wear a headset with two tiny computer monitors inside. The computer can determine where you are in the room, and it paints the images on those two monitors to match your perspective. So as you move your head around, the images change to reflect your updated position.
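As a rough illustration of what "matching your perspective" involves, the sketch below computes where each of the two headset displays would need to place its viewpoint, given a tracked head position and facing direction. It is a minimal sketch under stated assumptions, not the lab's software: the function name `eye_positions`, the flat two-dimensional floor plan, and the 64 mm eye spacing are all hypothetical choices made for the example.

```python
import math


def eye_positions(head_x, head_y, head_yaw_rad, eye_spacing_m=0.064):
    """Return ((left_x, left_y), (right_x, right_y)) for a tracked head.

    Assumptions (hypothetical, for illustration only):
    - positions are in meters on a flat floor plan, x forward at yaw 0,
      y to the wearer's left, yaw measured counterclockwise from above;
    - eye_spacing_m is an assumed distance between the wearer's eyes.
    """
    # Unit vector pointing to the wearer's right: the facing direction
    # (cos yaw, sin yaw) rotated 90 degrees clockwise.
    right_dir_x = math.sin(head_yaw_rad)
    right_dir_y = -math.cos(head_yaw_rad)
    half = eye_spacing_m / 2.0
    left_eye = (head_x - half * right_dir_x, head_y - half * right_dir_y)
    right_eye = (head_x + half * right_dir_x, head_y + half * right_dir_y)
    return left_eye, right_eye


if __name__ == "__main__":
    # The tracker reports a new head pose; each display is redrawn
    # from its own eye position.
    print(eye_positions(1.0, 2.0, 0.0))
    print(eye_positions(1.0, 2.0, math.pi / 2))
```

Each time the tracker reports a new head pose, a system along these lines would recompute both viewpoints and redraw the scene from them, which is why the images follow the wearer's head.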

Fountain: People actually walk around when they’re in one of your virtual environments. How does that affect their behavior?

SR: Most virtual environment systems use a joystick to navigate, just like you would use a joystick in a computer game. You move the joystick left to turn left, or move it right to turn right. But the problem with using a joystick is that you’re more likely to get motion sick, and it’s not as realistic as actually walking through a scene.

One of the theories behind motion sickness is that your eyes and your inner ear get a different sense of motion, and that conflict is what makes you sick. For example, in the back of a car, your inner ear tells you that you’re moving. But your eyes, which are looking at the inside of the car, tell you that you’re not. It’s the same thing with a joystick: As you move the joystick to move forward in the virtual scene, your inner ear knows that you’re not moving.

By really walking you get rid of that. As you take two steps forward, you see in the headset that you took two steps forward, and you feel that you took steps forward. We also measure people’s heart rates and palm skin conductance during experiments, and people behave much more like they are in the real scene when they’re really walking.

One of our virtual environments has a room with a big pit in the middle that the person has to drop a ball into. People are much more terrified of that pit when they’re really walking than when they’re using a joystick. When you walk through the scene, there’s a really strong internal reaction against stepping over the pit, even though you know cognitively that the floor is there. So there’s some other part of your brain that the computer is convincing.


Fountain: Why is redirected walking important, and how does it work?

SR: We have this beautiful technology that lets you really walk through a room, but even in the largest labs you can’t create more than a room or two of a virtual scene and still have people walk. The labs just aren’t big enough. And the really interesting spaces are larger: a whole house, a whole building or an entire archeological dig. So my thesis is about getting people to walk in a circle when they think they’re walking in a straight line, so that in a small lab like ours we can have large virtual scenes.

The way it works is the computer will very subtly turn the virtual scene around the person’s head by manipulating what they’re seeing through the headset, so that the person thinks they’re off balance and should move to compensate. We use our eyes to maintain balance, so by changing the virtual scene in just the right way, you convince the person that rather than the world turning, they moved, and that they should turn in the direction of the visual to compensate. So every time they take a step forward the scene rotates a little bit more, and they’ll walk on a curved path without realizing it.
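The geometry of that description can be sketched in a few lines of code. The example below is a simplified model, not Razzaque's actual system: it assumes the walker turns by exactly the amount the scene was rotated on every step, and the function names, step length and rotation per step are hypothetical values chosen only to make the arithmetic easy to follow.

```python
import math


def simulate_redirected_walk(steps, step_length_m, rotation_per_step_rad):
    """Return (real_path, virtual_path) as lists of (x, y) positions.

    Simplified model (assumption): on every step the scene is rotated by
    rotation_per_step_rad and the walker compensates by turning the same
    amount in the lab, so the virtual heading never changes.
    """
    real_x = real_y = 0.0
    virt_x = virt_y = 0.0
    real_heading = 0.0     # direction of travel in the lab
    virtual_heading = 0.0  # direction of travel in the virtual scene
    real_path = [(real_x, real_y)]
    virtual_path = [(virt_x, virt_y)]
    for _ in range(steps):
        # The scene turns a little; the walker turns with it unawares.
        real_heading += rotation_per_step_rad
        real_x += step_length_m * math.cos(real_heading)
        real_y += step_length_m * math.sin(real_heading)
        # In the virtual scene the walker keeps going straight ahead.
        virt_x += step_length_m * math.cos(virtual_heading)
        virt_y += step_length_m * math.sin(virtual_heading)
        real_path.append((real_x, real_y))
        virtual_path.append((virt_x, virt_y))
    return real_path, virtual_path


def farthest_from_start(path):
    """Largest straight-line distance from the first point of the path."""
    start = path[0]
    return max(math.dist(start, point) for point in path)


if __name__ == "__main__":
    # Hypothetical numbers: 120 steps of 0.7 m, 3 degrees of turn per step.
    real, virtual = simulate_redirected_walk(120, 0.7, math.radians(3.0))
    print("virtual distance covered:", farthest_from_start(virtual))
    print("farthest point reached in the lab:", farthest_from_start(real))
    print("final lab position:", real[-1])
```

With these made-up numbers the walker completes one full loop in the lab and ends up back at the starting point, while in the virtual scene the path is a straight line as long as all the steps laid end to end. A larger rotation per step tightens the real-world circle, which is the trade-off the technique has to balance against the walker noticing the rotation.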

For example, I conducted one experiment where people were walking diagonally through a large virtual room to do a fire drill. To them, it felt like they were walking through this large room. But in reality they were walking back and forth between the ends of our lab.

Fountain: What sort of applications might this technology have?

SR: My favorite is architectural walkthrough. An architect might want to bring her client through a building and convey a sense of the space. Is the kitchen large enough? Is this hallway too cramped? You can’t really get that from a blueprint or a picture.

Also, the military would like to be able to train people for dangerous situations, like clearing a building or finding a hostage. Right now they build a mock town in Quantico, Virginia, so they can train people to run from one building to another, run through smoke, that sort of thing. But the problem comes when troops are on a boat for two months and lose their training. So they’d like to be able to fit virtual reality labs on ships and have people stay practiced that way.

And a lot of people in psychology want to use this technology for phobia desensitization. Take our experiment with the pit. If you’re a person with vertigo, they would start off with low realism and slowly increase it until you could deal with the real thing. Applications like this are one reason the technology’s so important: It’s crucial that the person really feels like they’re in the virtual scene. So the more realistic the experience, the better.

Fountain: How has the Ross and Charlotte Johnson Family Dissertation Fellowship affected your work?

SR: Having the fellowship helps a lot. In previous years I have been a teaching assistant or a research assistant. Those jobs are fun, but when you’re grading papers that are due the next day, your own work is the first thing to fall by the wayside. Having the fellowship has freed me up so that I can focus exclusively on redirected walking.

© 2002, The Graduate School, The University of North Carolina at Chapel Hill

All text and images are property of The Graduate School at the University of North Carolina-Chapel Hill. Contact Sandra Hoeflich at shoeflic@email.unc.edu to request permission for reproduction.